White Paper on Introduction to cloud computing

COURTESY :- vrindawan.in

Wikipedia

Cloud computing is the on-demand availability of computer system resources, especially data storage (cloud storage) and computing power, without direct active management by the user. Large clouds often have functions distributed over multiple locations, each of which is a data center. Cloud computing relies on sharing of resources to achieve coherence and typically uses a “pay as you go” model, which can help in reducing capital expenses but may also lead to unexpected operating expenses for users.

Advocates of public and hybrid clouds claim that cloud computing allows companies to avoid or minimize up-front IT infrastructure costs. Proponents also claim that cloud computing allows enterprises to get their applications up and running faster, with improved manageability and less maintenance, and that it enables IT teams to more rapidly adjust resources to meet fluctuating and unpredictable demand, providing burst computing capability: high computing power at certain periods of peak demand.

According to IDC, global spending on cloud computing services has reached $706 billion and is expected to reach $1.3 trillion by 2025. Gartner estimated that global public cloud services end-user spending would reach $600 billion by 2023. According to a McKinsey & Company report, cloud cost-optimization levers and value-oriented business use cases put more than $1 trillion in run-rate EBITDA across Fortune 500 companies up for grabs in 2030. In 2022, more than $1.3 trillion in enterprise IT spending was at stake from the shift to cloud, growing to almost $1.8 trillion in 2025, according to Gartner.

The term cloud was used to refer to platforms for distributed computing as early as 1993, when Apple spin-off General Magic and AT&T used it in describing their (paired) Telescript and PersonaLink technologies. In Wired’s April 1994 feature “Bill and Andy’s Excellent Adventure II”, Andy Hertzfeld commented on Telescript, General Magic’s distributed programming language:

“The beauty of Telescript … is that now, instead of just having a device to program, we now have the entire Cloud out there, where a single program can go and travel to many different sources of information and create a sort of a virtual service. No one had conceived that before. The example Jim White [the designer of Telescript, X.400 and ASN.1] uses now is a date-arranging service where a software agent goes to the flower store and orders flowers and then goes to the ticket shop and gets the tickets for the show, and everything is communicated to both parties.”

During the 1960s, the initial concepts of time-sharing became popularized via RJE (Remote Job Entry); this terminology was mostly associated with large vendors such as IBM and DEC. Full-time-sharing solutions were available by the early 1970s on such platforms as Multics (on GE hardware), Cambridge CTSS, and the earliest UNIX ports (on DEC hardware). Yet, the “data center” model where users submitted jobs to operators to run on IBM’s mainframes was overwhelmingly predominant.

In the 1990s, telecommunications companies, who previously offered primarily dedicated point-to-point data circuits, began offering virtual private network (VPN) services with comparable quality of service, but at a lower cost. By switching traffic as they saw fit to balance server use, they could use overall network bandwidth more effectively. They began to use the cloud symbol to denote the demarcation point between what the provider was responsible for and what users were responsible for. Cloud computing extended this boundary to cover all servers as well as the network infrastructure. As computers became more diffused, scientists and technologists explored ways to make large-scale computing power available to more users through time-sharing. They experimented with algorithms to optimize the infrastructure, platform, and applications, to prioritize tasks to be executed by CPUs, and to increase efficiency for end users.

The use of the cloud metaphor for virtualized services dates at least to General Magic in 1994, where it was used to describe the universe of “places” that mobile agents in the Telescript environment could go. As described by Andy Hertzfeld:

“The beauty of Telescript,” says Andy, “is that now, instead of just having a device to program, we now have the entire Cloud out there, where a single program can go and travel to many different sources of information and create a sort of a virtual service.”

The use of the cloud metaphor is credited to General Magic communications employee David Hoffman, based on long-standing use in networking and telecom. In addition to use by General Magic itself, it was also used in promoting AT&T’s associated PersonaLink Services.

In July 2002, Amazon created subsidiary Amazon Web Services, with the goal to “enable developers to build innovative and entrepreneurial applications on their own.” In March 2006 Amazon introduced its Simple Storage Service (S3), followed by Elastic Compute Cloud (EC2) in August of the same year. These products pioneered the usage of server virtualization to deliver IaaS at a cheaper and on-demand pricing basis.

In April 2008, Google released the beta version of Google App Engine. The App Engine was a PaaS (one of the first of its kind) which provided fully maintained infrastructure and a deployment platform for users to create web applications using common languages/technologies such as Python, Node.js and PHP. The goal was to eliminate the need for some administrative tasks typical of an IaaS model, while creating a platform where users could easily deploy such applications and scale them to demand.

In early 2008, NASA’s Nebula, enhanced in the RESERVOIR European Commission-funded project, became the first open-source software for deploying private and hybrid clouds, and for the federation of clouds.

By mid-2008, Gartner saw an opportunity for cloud computing “to shape the relationship among consumers of IT services, those who use IT services and those who sell them” and observed that “organizations are switching from company-owned hardware and software assets to per-use service-based models” so that the “projected shift to computing … will result in dramatic growth in IT products in some areas and significant reductions in other areas.”

In 2008, the U.S. National Science Foundation began the Cluster Exploratory program to fund academic research using Google-IBM cluster technology to analyze massive amounts of data.

In 2009, the government of France announced Project Andromède to create a “sovereign cloud”, or national cloud computing capability, with the government to spend €285 million. The initiative failed badly and Cloudwatt was shut down on 1 February 2020.

In February 2010, Microsoft released Microsoft Azure, which was announced in October 2008.

In July 2010, Rackspace Hosting and NASA jointly launched an open-source cloud-software initiative known as OpenStack. The OpenStack project was intended to help organizations offer cloud-computing services running on standard hardware. The early code came from NASA’s Nebula platform as well as from Rackspace’s Cloud Files platform. As an open-source offering, along with other open-source solutions such as CloudStack, Ganeti, and OpenNebula, it has attracted attention from several key communities. Several studies aim at comparing these open-source offerings based on a set of criteria.

On March 1, 2011, IBM announced the IBM SmartCloud framework to support Smarter Planet. Among the various components of the Smarter Computing foundation, cloud computing is a critical part. On June 7, 2012, Oracle announced the Oracle Cloud. This cloud offering is poised to be the first to provide users with access to an integrated set of IT solutions, including the Applications (SaaS), Platform (PaaS), and Infrastructure (IaaS) layers.

In May 2012, Google Compute Engine was released in preview, before being rolled out into General Availability in December 2013.

In 2019, Linux was the most common OS used on Microsoft Azure. In December 2019, Amazon announced AWS Outposts, which is a fully managed service that extends AWS infrastructure, AWS services, APIs, and tools to virtually any customer datacenter, co-location space, or on-premises facility for a truly consistent hybrid experience.

The goal of cloud computing is to allow users to benefit from all of these technologies without needing deep knowledge of or expertise with each of them. The cloud aims to cut costs and help users focus on their core business instead of being impeded by IT obstacles. The main enabling technology for cloud computing is virtualization. Virtualization software separates a physical computing device into one or more “virtual” devices, each of which can be easily used and managed to perform computing tasks. With operating system–level virtualization essentially creating a scalable system of multiple independent computing devices, idle computing resources can be allocated and used more efficiently. Virtualization provides the agility required to speed up IT operations and reduces cost by increasing infrastructure utilization. Autonomic computing automates the process through which the user can provision resources on demand. By minimizing user involvement, automation speeds up the process, reduces labor costs, and reduces the possibility of human error.
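As a rough illustration of how consolidating workloads as virtual machines can raise infrastructure utilization, the sketch below places VM requests onto physical hosts with a simple first-fit rule. The `Host` class, the host names, and the core counts are hypothetical and chosen only for the example; real placement schedulers also weigh memory, storage, affinity, and many other constraints.

```python
from dataclasses import dataclass, field


@dataclass
class Host:
    """A physical machine with a fixed number of CPU cores (hypothetical model)."""
    name: str
    cores: int
    vms: list = field(default_factory=list)  # list of (vm_name, cores) tuples

    @property
    def used(self) -> int:
        return sum(size for _, size in self.vms)

    @property
    def utilization(self) -> float:
        return self.used / self.cores


def place_first_fit(hosts, requests):
    """Place each (vm_name, cores) request on the first host with enough room.

    Returns the requests that did not fit on any host.
    """
    unplaced = []
    for vm_name, size in requests:
        for host in hosts:
            if host.used + size <= host.cores:
                host.vms.append((vm_name, size))
                break
        else:
            # No host had enough free cores for this request.
            unplaced.append((vm_name, size))
    return unplaced


# Illustrative data only: two 16-core hosts and five VM requests.
hosts = [Host("host-a", 16), Host("host-b", 16)]
requests = [("web", 4), ("db", 8), ("cache", 2), ("batch", 8), ("ci", 6)]

unplaced = place_first_fit(hosts, requests)
for host in hosts:
    print(f"{host.name}: {host.used}/{host.cores} cores, {host.utilization:.0%} utilized")
print("unplaced:", unplaced)
```

Five workloads that might otherwise each occupy a dedicated, mostly idle server end up sharing two hosts here, which is the utilization argument made above in miniature.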

Cloud computing uses concepts from utility computing to provide metrics for the services used. Cloud computing attempts to address QoS (quality of service) and reliability problems of other grid computing models.

Cloud computing shares characteristics with:

- Client–server model: Client–server computing refers broadly to any distributed application that distinguishes between service providers (servers) and service requestors (clients).

- Computer bureau: A service bureau providing computer services, particularly from the 1960s to 1980s.

- Grid computing: A form of distributed and parallel computing, whereby a ‘super and virtual computer’ is composed of a cluster of networked, loosely coupled computers acting in concert to perform very large tasks.

- Fog computing: A distributed computing paradigm that provides data, compute, storage and application services closer to the client or near-user edge devices, such as network routers. Furthermore, fog computing handles data at the network level, on smart devices and on the end-user client side (e.g. mobile devices), instead of sending data to a remote location for processing.

- Utility computing: The “packaging of computing resources, such as computation and storage, as a metered service similar to a traditional public utility, such as electricity.”

- Peer-to-peer: A distributed architecture without the need for central coordination. Participants are both suppliers and consumers of resources (in contrast to the traditional client–server model).

- Cloud sandbox: A live, isolated computer environment in which a program, code or file can run without affecting the application in which it runs.

Cloud computing exhibits the following key characteristics:

- Cost reductions are claimed by cloud providers. A public-cloud delivery model converts capital expenditures (e.g., buying servers) to operational expenditure. This purportedly lowers barriers to entry, as infrastructure is typically provided by a third party and need not be purchased for one-time or infrequent intensive computing tasks. Pricing on a utility computing basis is “fine-grained”, with usage-based billing options. In addition, fewer in-house IT skills are required to implement projects that use cloud computing. The e-FISCAL project’s state-of-the-art repository contains several articles looking into cost aspects in more detail, most of them concluding that cost savings depend on the type of activities supported and the type of infrastructure available in-house.

- Device and location independence enable users to access systems using a web browser regardless of their location or what device they use (e.g., PC, mobile phone). As infrastructure is off-site (typically provided by a third party) and accessed via the Internet, users can connect to it from anywhere.

- Maintenance of cloud environment is easier because the data is hosted on an outside server maintained by a provider without the need to invest in data center hardware. IT maintenance of cloud computing is managed and updated by the cloud provider’s IT maintenance team which reduces cloud computing costs compared with on-premises data centers.

- Multitenancy enables sharing of resources and costs across a large pool of users, thus allowing for:

  - centralization of infrastructure in locations with lower costs (such as real estate, electricity, etc.)

  - peak-load capacity increases (users need not engineer and pay for the resources and equipment to meet their highest possible load levels)

  - utilisation and efficiency improvements for systems that are often only 10–20% utilised.

- Performance is monitored by IT experts from the service provider, and consistent and loosely coupled architectures are constructed using web services as the system interface.

- Productivity may be increased when multiple users can work on the same data simultaneously, rather than waiting for it to be saved and emailed. Time may be saved as information does not need to be re-entered when fields are matched, nor do users need to install application software upgrades to their computer.

- Availability improves with the use of multiple redundant sites, which makes well-designed cloud computing suitable for business continuity and disaster recovery.

- Scalability and elasticity via dynamic (“on-demand”) provisioning of resources on a fine-grained, self-service basis in near real-time, without users having to engineer for peak loads (note that VM startup time varies by VM type, location, OS, and cloud provider). This gives the ability to scale up when usage increases or down when resources are not being used. The time savings of cloud scalability also mean faster time to market, more business flexibility, and adaptability, as adding new resources does not take as much time as it used to. Emerging approaches for managing elasticity include the use of machine learning techniques to propose efficient elasticity models.

- Security can improve due to centralization of data, increased security-focused resources, etc., but concerns can persist about loss of control over certain sensitive data, and the lack of security for stored kernels. Security is often as good as or better than other traditional systems, in part because service providers are able to devote resources to solving security issues that many customers cannot afford to tackle or which they lack the technical skills to address. However, the complexity of security is greatly increased when data is distributed over a wider area or over a greater number of devices, as well as in multi-tenant systems shared by unrelated users. In addition, user access to security audit logs may be difficult or impossible. Private cloud installations are in part motivated by users’ desire to retain control over the infrastructure and avoid losing control of information security.
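The scalability and elasticity characteristic in the list above can be made concrete with a small sketch of a proportional scaling rule: the instance count is adjusted so that average utilization moves toward a target. The function name, the 60% target, and the instance limits are assumptions chosen for illustration, not any provider's actual policy; utilization is expressed as an integer percentage to keep the arithmetic exact.

```python
def desired_instances(current, avg_util_pct, target_pct=60, min_n=1, max_n=20):
    """Return an instance count that moves average utilization toward the target.

    Rule (illustrative): wanted = ceil(current * avg_util_pct / target_pct),
    clamped to the range [min_n, max_n].
    """
    if current < 1:
        raise ValueError("current must be at least 1")
    # Integer ceiling division avoids floating-point rounding surprises.
    wanted = -(-current * avg_util_pct // target_pct)
    return max(min_n, min(max_n, wanted))


# Hypothetical sequence of observed average utilization readings (percent).
observed = [90, 95, 60, 30, 10]

n = 4
history = []
for util in observed:
    n = desired_instances(n, util)
    history.append(n)
    print(f"observed {util:>3}% -> run {n} instance(s)")
```

The pool grows while utilization is above the target and shrinks again when demand falls, so capacity does not have to be engineered for the peak in advance. A production autoscaler would normally add cooldown periods and smoothing to avoid reacting to short spikes.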

The National Institute of Standards and Technology’s definition of cloud computing identifies “five essential characteristics”:

On-demand self-service. A consumer can unilaterally provision computing capabilities, such as server time and network storage, as needed automatically without requiring human interaction with each service provider.

Broad network access. Capabilities are available over the network and accessed through standard mechanisms that promote use by heterogeneous thin or thick client platforms (e.g., mobile phones, tablets, laptops, and workstations).

Resource pooling. The provider’s computing resources are pooled to serve multiple consumers using a multi-tenant model, with different physical and virtual resources dynamically assigned and reassigned according to consumer demand.

Rapid elasticity. Capabilities can be elastically provisioned and released, in some cases automatically, to scale rapidly outward and inward commensurate with demand. To the consumer, the capabilities available for provisioning often appear unlimited and can be appropriated in any quantity at any time.

Measured service. Cloud systems automatically control and optimize resource use by leveraging a metering capability at some level of abstraction appropriate to the type of service (e.g., storage, processing, bandwidth, and active user accounts). Resource usage can be monitored, controlled, and reported, providing transparency for both the provider and consumer of the utilized service.
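The measured-service characteristic, together with the “pay as you go” model mentioned earlier, can be sketched as a small metering and billing routine. The tenant names, metric names, and unit prices below are entirely hypothetical; real providers publish their own metrics and rates.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical unit prices in dollars; not taken from any real price list.
PRICES = {
    "vm_hours": Decimal("0.05"),
    "storage_gb_month": Decimal("0.02"),
    "egress_gb": Decimal("0.09"),
}


def bill(usage_records):
    """Aggregate (tenant, metric, quantity) records into per-tenant invoices.

    A metric missing from PRICES raises KeyError, so unpriced usage is
    never silently dropped.
    """
    totals = defaultdict(lambda: defaultdict(Decimal))
    for tenant, metric, quantity in usage_records:
        totals[tenant][metric] += Decimal(str(quantity))

    invoices = {}
    for tenant, metrics in totals.items():
        lines = {metric: qty * PRICES[metric] for metric, qty in metrics.items()}
        invoices[tenant] = {
            "lines": lines,
            "total": sum(lines.values(), Decimal("0")),
        }
    return invoices


# Illustrative usage records for two made-up tenants.
records = [
    ("acme", "vm_hours", 720),
    ("acme", "storage_gb_month", 500),
    ("acme", "egress_gb", 40),
    ("globex", "vm_hours", 100),
    ("acme", "vm_hours", 30),
]

invoices = bill(records)
for tenant, invoice in invoices.items():
    print(tenant, invoice["total"])
```

Because every charge is derived from a metered quantity, both the provider and the consumer can audit the same records, which is the transparency the NIST definition describes. It also shows how costs track usage directly, so an unexpected spike in any metric appears on the bill.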

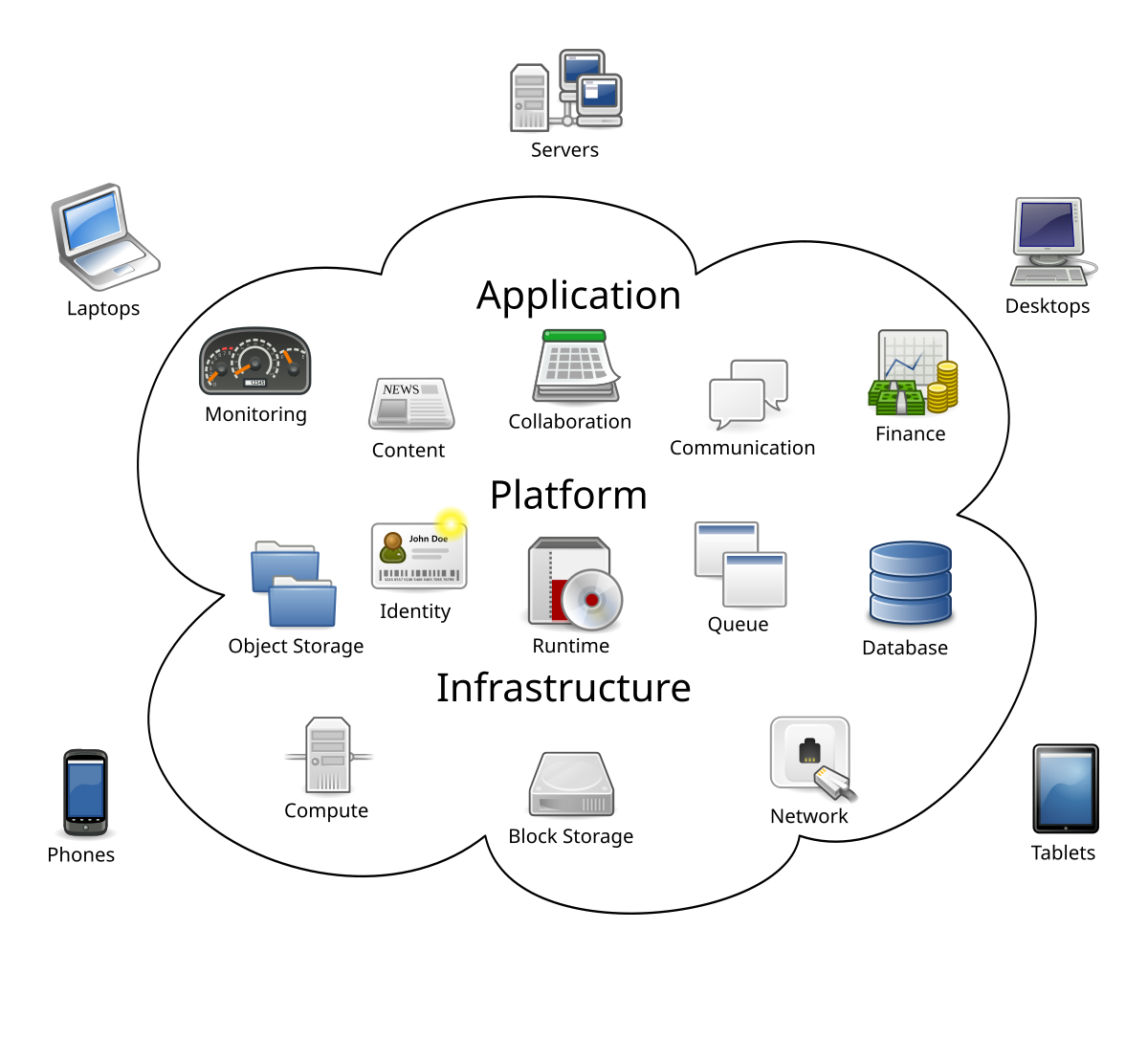

Though service-oriented architecture advocates “Everything as a service” (with the acronyms EaaS or XaaS, or simply aaS), cloud-computing providers offer their “services” according to different models, of which the three standard models per NIST are Infrastructure as a Service (IaaS), Platform as a Service (PaaS), and Software as a Service (SaaS). These models offer increasing abstraction; they are thus often portrayed as layers in a stack (infrastructure-, platform- and software-as-a-service), but these need not be related. For example, one can provide SaaS implemented on physical machines (bare metal), without using underlying PaaS or IaaS layers, and conversely one can run a program on IaaS and access it directly, without wrapping it as SaaS.
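The idea of increasing abstraction can be sketched as a simple data structure that records how far up a stack the provider's management reaches under each model. The five layer names and the cut-off points are a deliberately simplified assumption for illustration; as noted above, real offerings need not be layered this way.

```python
# Simplified, hypothetical stack from lowest to highest layer.
LAYERS = ["hardware", "virtualization", "operating system", "runtime", "application"]

# Highest layer the provider manages under each model (illustrative assumption).
PROVIDER_MANAGES_THROUGH = {
    "IaaS": "virtualization",
    "PaaS": "runtime",
    "SaaS": "application",
}


def split_responsibility(model):
    """Return which layers the provider and the consumer each manage."""
    cut = LAYERS.index(PROVIDER_MANAGES_THROUGH[model]) + 1
    return {"provider": LAYERS[:cut], "consumer": LAYERS[cut:]}


for model in ("IaaS", "PaaS", "SaaS"):
    split = split_responsibility(model)
    print(f"{model}: consumer manages {split['consumer'] or 'nothing in this stack'}")
```

Moving from IaaS to SaaS, the consumer's list shrinks, which is what “increasing abstraction” means in practice: less to administer, but also less control over the underlying layers.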